Good morning. It’s Monday, May 27th.

Did you know: On this day in 1988, Microsoft released Windows 2.1? We should really bring back that beautiful UI.

In today’s email:

OpenAI Ex-Board Member’s Warning

Elon’s Gigafactory of Compute

Apple’s Potential WWDC Partnerships

Big Tech Agrees on AI Killswitch

5 New AI Tools

Latest AI Research Papers

You read. We listen. Let us know what you think by replying to this email.

In partnership with SEO Blog Generator

Boost your revenue with the SEO Blog Generator

Drive more traffic to your website and increase your revenue with SEO-optimized blog posts, eye-catching images, and automated social media post suggestions.

Key Benefits:

Drive Traffic: Attract more visitors and turn them into customers with perfectly optimized posts that rank higher on search engines.

Meta Titles & Descriptions: Optimize your posts further with automatically generated meta titles and descriptions.

Automatic Image Generation: Enhance your posts with stunning visuals generated automatically.

Social Media Automation: Get tailored post suggestions for Facebook, Twitter, Instagram, and LinkedIn to keep your feeds active and engaging.

Multi-Language: Reach a global audience with multi-language options.

Today’s trending AI news stories

Former OpenAI Board Members: “The company can't be trusted to govern itself.”

In a scathing op-ed for The Economist, former OpenAI board members Helen Toner and Tasha McCauley assert that AI companies cannot effectively self-govern and advocate for third-party regulation. They stand by their decision to remove CEO Sam Altman, citing reports from senior leaders within OpenAI that he cultivated a "toxic culture of lying" and engaged in behavior that could be characterized as "psychological abuse."

Altman's reinstatement, coupled with safety concerns and the use of a voice resembling Scarlett Johansson's in GPT-4o, has cast doubt on OpenAI's self-regulatory experiment. Toner and McCauley view these developments as a cautionary tale, urging external oversight to prevent a "governance-by-gaffe" approach.

While acknowledging the Department of Homeland Security's recent AI safety initiatives, they express concern about the influence of "profit-driven entities" on policy. Independent regulation, they argue, is the linchpin for ensuring ethical and competitive AI development, free from the undue influence of corporate interests. Read more.

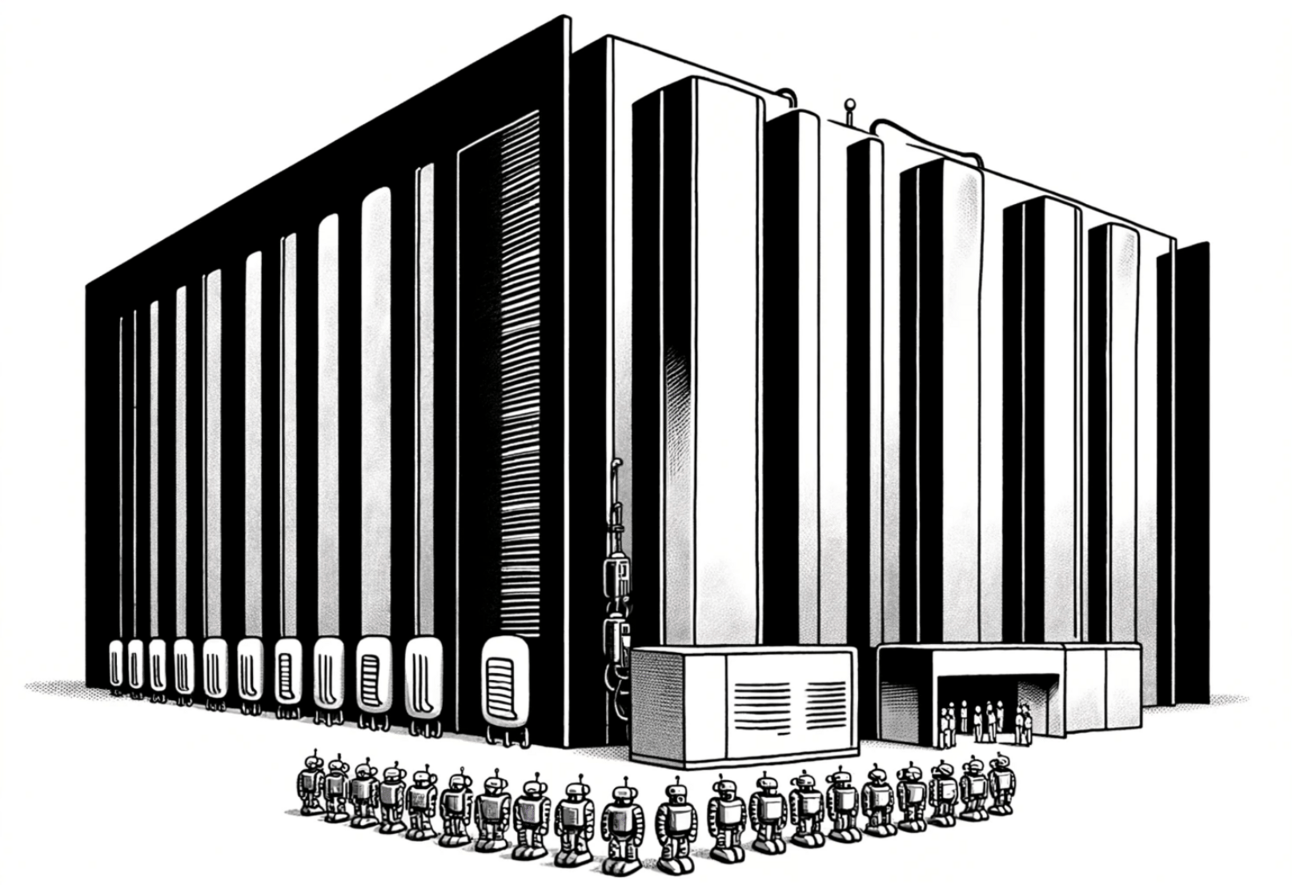

Elon Musk's xAI Plans "Gigafactory of Compute" to Dwarf Meta's Massive GPU Clusters

Elon Musk's xAI is developing an ambitious "Gigafactory of Compute": a supercomputer intended to surpass those of competitors like Meta. Targeted for fall 2025, the Nvidia H100 GPU-powered system is projected to offer about four times the processing power of today's largest GPU clusters.

While its location remains undisclosed, Musk has said he will personally ensure its timely completion. Potential collaboration with Oracle suggests the platform will support xAI's Grok chatbot on a yet-to-be-revealed "X" platform.

Musk foresees AI outpacing human intelligence by next year with ample computing power. Microsoft and OpenAI also plan a $100 billion supercomputer, "Stargate," aiming for full development by 2030, contingent upon significant AI research progress. Read more.

Related story: Elon Musk’s xAI raises $6 billion

Apple’s WWDC may include AI-generated emoji and an OpenAI partnership

Mark Gurman's Bloomberg report suggests WWDC 2024 might witness an uptick in Apple's "applied intelligence" initiatives. This could include AI-powered emoji generation and deeper integration of on-device functionalities like voice memo transcription.

While the rumored partnership with OpenAI remains unconfirmed, whispers hint at advancements in chatbot technology. Local processing will likely take precedence, with M2 Ultra servers handling computationally intensive tasks. Additionally, expect refinements to Siri and potential feature extensions utilizing Apple's large language models.

The rumored ability to customize app icons and layouts in iOS 18 further underscores a user-centric approach. Overall, the focus appears to be on leveraging AI for practical enhancements across the Apple ecosystem. Read more.

Tech companies have agreed to an AI ‘kill switch’ to prevent Terminator-style risks

Tech companies, including Anthropic, Microsoft, and OpenAI, alongside 10 countries and the EU, have committed to responsible AI development by agreeing to implement an AI 'kill switch.' This policy aims to halt the advancement of their most sophisticated AI models if they cross certain risk thresholds.

However, the effectiveness of this measure is uncertain, lacking legal enforcement or specific risk criteria. The agreement, signed at a summit in Seoul, follows previous gatherings like the Bletchley Park AI Safety Summit, criticized for its lack of actionable commitments. Concerns about AI development's potential adverse effects, reminiscent of sci-fi scenarios like the Terminator, have prompted calls for regulatory frameworks. While individual governments have taken steps, global initiatives have been largely non-binding. Read more.

Who will make AlphaFold3 open source? Scientists race to crack AI model: Following the publication of DeepMind's AlphaFold3 in Nature, a "gold rush" for open-source alternatives has begun. The lack of accompanying code has drawn concern from the community (DeepMind has since promised a delayed release) and spurred initiatives like Columbia's "OpenFold" project. Transparency remains a priority, echoing Nature's code-sharing policies. While the details of DeepMind's future release are unclear, particularly regarding protein-drug interactions, open-source versions offer the potential for retraining and improved performance, which is crucial for pharmaceutical applications. Scientists like David Baker and Phil Wang seek insights from AlphaFold3 for their models. Hacked versions are already emerging, indicating the demand for accessibility and transparency in AI tool development. Read more.

Google scrambles to manually remove weird AI answers in search: Google is facing challenges with its AI Overview product, which has been generating bizarre responses like suggesting users put glue on pizza or eat rocks. The company is manually removing these strange answers as they appear on social media, indicating a hasty response to the issue. Despite being in beta since May 2023 and processing over a billion queries, the product's rollout has been marred by unexpected outputs. Google claims to be “swiftly addressing the problem” and is using instances of weird responses to improve its systems. Read more.

Etcetera: Stories you may have missed

5 new AI-powered tools from around the web

Invisibility integrates advanced AI models (GPT-4o, Claude 3 Opus, Gemini, Llama 3) for Mac, offering seamless multitasking via a simple keyboard shortcut.

HyperCrawl offers zero-latency web crawling optimized for retrieval-based LLM development, enhancing data gathering and efficiency in AI research projects.

Forloop.ai is a no-code platform for web scraping and data automation, enabling rapid data gathering, preparation, and process automation for teams.

PitchFlow auto-generates pitch decks in one minute with startup inputs, allowing entrepreneurs to focus on product development and user engagement.

Zycus Generative AI boosts Source-to-Pay (S2P) productivity by 10x, enhancing efficiency, cost savings, and risk management in procurement processes.

arXiv is a free online library where researchers share pre-publication papers.

📄 Grokked Transformers are Implicit Reasoners: A Mechanistic Journey to the Edge of Generalization

Thank you for reading today’s edition.

Your feedback is valuable. Respond to this email and tell us how you think we could add more value to this newsletter.

Interested in reaching smart readers like you? To become an AI Breakfast sponsor, apply here.