Good morning. It’s Monday, February 9th.

On this day in tech history: In 2010, Google Buzz buzzed onto the scene, a short-lived social layer in Gmail for real-time sharing. Though a flop, it harvested interaction data that fed Google's ML evolution, from ranking algos to BERT precursors. Its location-based "nearby" feature hinted at geospatial AI in Maps.

In today’s email:

Google's PaperBanana uses five AI agents to auto-generate scientific diagrams

Claude ‘Fast Mode’ hikes speed with a 6x price surge over standard

Did OpenAI’s hardware product “Dime” ad leak?

5 New AI Tools

Latest AI Research Papers

You read. We listen. Let us know what you think by replying to this email.

Better prompts. Better AI output.

AI gets smarter when your input is complete. Wispr Flow helps you think out loud and capture full context by voice, then turns that speech into a clean, structured prompt you can paste into ChatGPT, Claude, or any assistant. No more chopping up thoughts into typed paragraphs. Preserve constraints, examples, edge cases, and tone by speaking them once. The result is faster iteration, more precise outputs, and less time re-prompting. Try Wispr Flow for AI or see a 30-second demo.

Today’s trending AI news stories

Google's PaperBanana uses five AI agents to auto-generate scientific diagrams

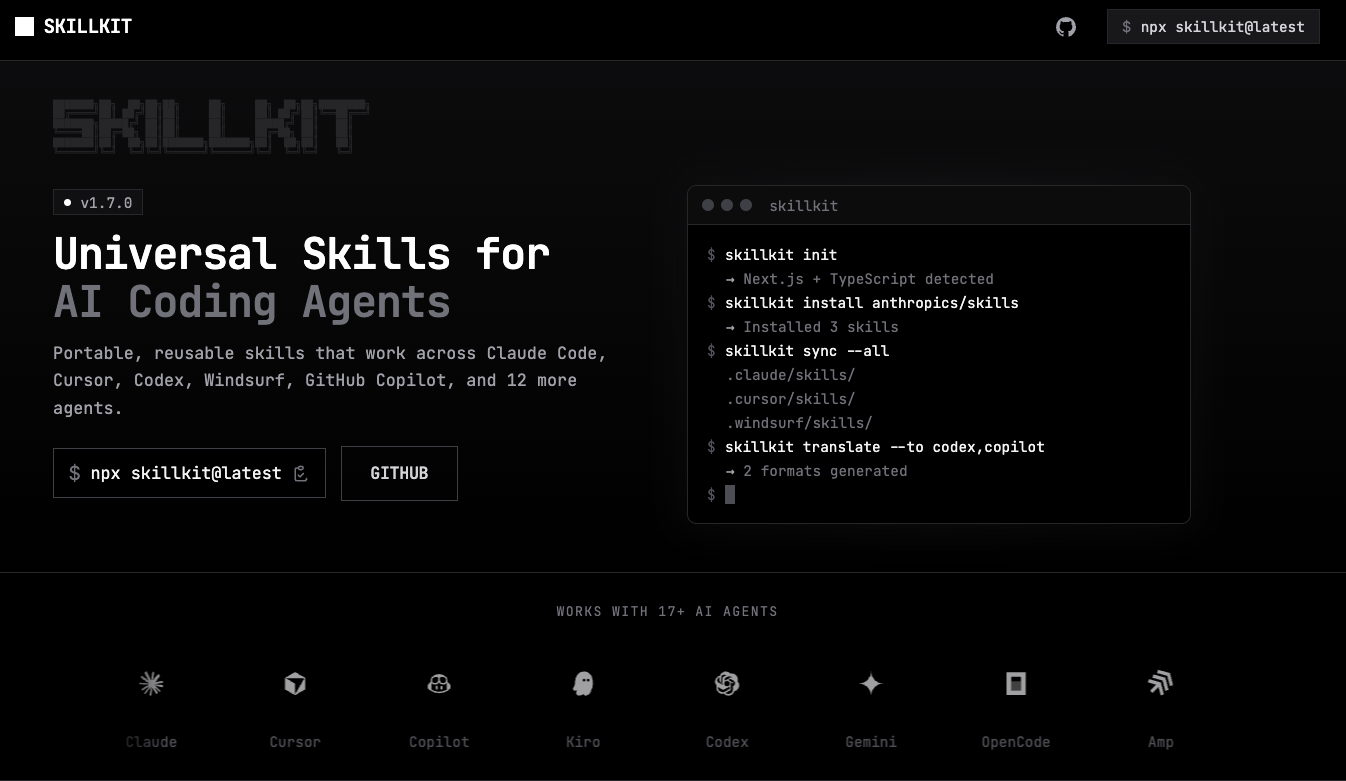

Scientific diagrams are usually a manual bottleneck, but Google’s PaperBanana aims to change that. Developed by Peking University and Google, this framework uses five specialized AI agents to build publication-ready figures: a Retriever, Planner, Stylist, Visualizer, and Critic that work together to refine every image.

For charts, it writes and runs Matplotlib code instead of drawing them directly, so the plotted values stay accurate. Reviewers preferred these diagrams 73% of the time, though the AI still occasionally struggles with complex line alignments.

Since 2016, we’ve been working towards the transition to PQC, focusing on “crypto agility,” updating or replacing cryptographic algorithms without disrupting services.

As we rely more on AI, Google is also warning about quantum computers that could eventually break today’s encryption. To stop "store now, decrypt later" attacks, Google is moving its systems to Post-Quantum Cryptography (PQC).

The algorithms Google is adopting, like the NIST-standardized ML-KEM, can be swapped in without any service downtime, and its Willow chip recently showed it can correct its own errors, adding urgency to adopting these new security standards by 2026. Google recommends a "cloud-first" approach for governments, as cloud providers can update security much faster than old on-premise servers. Read more.

Claude ‘Fast Mode’ hikes speed with a 6x price surge over standard

Anthropic has introduced a high-speed infrastructure tier for its flagship model, Claude Opus 4.6. Dubbed Fast Mode, the new feature offers a 2.5x reduction in response times but carries a steep 6x price markup over standard rates. This tier is engineered for synchronous, high-stakes workflows like live debugging where every second of compute counts.

The Cost of Performance. Standard input rates of $5 per million tokens jump to $30 in Fast Mode, while output costs climb from $25 to $150. These costs scale further with the model’s expanded 1,000,000-token context window; once an input exceeds 200,000 tokens, the input rate doubles and the output rate rises by 1.5x. This means that after the introductory discount ends on February 16, processing deep-context queries at high speeds will cost a massive $60 per million input tokens and $225 per million output tokens. Currently, this mode is restricted to Anthropic’s native environment via Claude Code and the API, excluding cloud providers like Google Vertex or Azure.
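For readers who want to check the math, here is a rough Python sketch of the pricing described above. The function names are ours, not Anthropic's, and two things are assumptions: that the long-context multipliers (2x input, 1.5x output, inferred from the $60 and $225 figures) apply to the whole request once the input passes 200,000 tokens, and that the introductory discount is ignored.

```python
# Per-million-token rates in USD, as quoted in the story above.
STANDARD_RATES = {"input": 5.00, "output": 25.00}
FAST_MODE_MARKUP = 6  # Fast Mode is 6x standard ($5 -> $30, $25 -> $150)

# Long-context surcharge. The multipliers are inferred from the quoted
# $60 / $225 figures; treat them as an assumption, not official pricing.
LONG_CONTEXT_THRESHOLD = 200_000
LONG_CONTEXT_MULTIPLIER = {"input": 2.0, "output": 1.5}


def rate_per_million(kind: str, fast: bool, input_tokens: int) -> float:
    """Return the USD rate per million tokens for 'input' or 'output'."""
    rate = STANDARD_RATES[kind]
    if fast:
        rate *= FAST_MODE_MARKUP
    # Assumption: the surcharge applies to the whole request once the
    # input exceeds the threshold.
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        rate *= LONG_CONTEXT_MULTIPLIER[kind]
    return rate


def request_cost(input_tokens: int, output_tokens: int, fast: bool = False) -> float:
    """Estimate the USD cost of a single request (discounts ignored)."""
    input_cost = input_tokens * rate_per_million("input", fast, input_tokens) / 1_000_000
    output_cost = output_tokens * rate_per_million("output", fast, input_tokens) / 1_000_000
    return input_cost + output_cost


if __name__ == "__main__":
    # Same hypothetical request priced in both tiers.
    print("standard:", request_cost(100_000, 10_000, fast=False))
    print("fast:    ", request_cost(100_000, 10_000, fast=True))
```

Under these assumptions, a 100,000-token prompt with a 10,000-token reply costs about $0.75 at standard rates and about $4.50 in Fast Mode, which is the 6x markup in practice.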

While the pricing reflects a luxury API market, the underlying model remains a powerhouse, recently claiming the top spot on the Artificial Analysis Intelligence Index. Its autonomous reasoning was highlighted by the discovery of over 500 zero-day vulnerabilities in open-source libraries like GhostScript and CGIF. This capability is already being utilized by Goldman Sachs, where embedded Anthropic engineers are using Claude to automate complex trade reconciliation and compliance roles.

This tension between high-cost automation and human oversight was a central theme for Anthropic this week. President Daniela Amodei noted that as AI commoditizes STEM skills, "studying the humanities will be more important than ever." This stance even forced a last-minute change to Anthropic’s Super Bowl ad after Sam Altman called their original copy "clearly dishonest." Anthropic pivoted from the aggressive "Ads are coming to AI. But not to Claude" to the more neutral: "There is a time and place for ads. Your conversations with AI should not be one of them." Read more.

Did OpenAI’s hardware product “Dime” ad leak?

A supposed leaked rough cut of OpenAI's Super Bowl LX advertisement, shared by a disgruntled employee on Reddit, has ignited fresh controversy in the intensifying AI rivalry with Anthropic. The provocative early version featured unsettling imagery—including a man handling a metallic, suppository-like object—paired with ominous text overlays such as "dime" and "almost time," apparently symbolizing the invasive, transformative creep of artificial intelligence into everyday life.

At the same time, the company is in talks with Abu Dhabi’s G42 to build a localized version of ChatGPT for the UAE. This version will use post-training layers to follow the country's social laws, which include prohibiting LGBTQ+ content. It marks a new era of "sovereign AI" where models are programmatically tuned to fit regional speech restrictions and cultural outlooks.

CEO Sam Altman is also describing the end of the traditional SaaS era. Altman expects AI agents to soon write their own code to access services even if an official API doesn't exist. "Every company is an API company now, whether they want to be or not," Altman noted, suggesting that the traditional user interface will lose value as agents interact directly with core systems.

OpenAI researcher Noam Brown backed this rapid pace, dismissing claims that AI progress is hitting a wall. He pointed to the release of GPT-5.3-Codex, which arrived just two months after GPT-5.2 and is twice as token-efficient for coding. Brown believes that by the end of 2026, standard evaluation benchmarks will have a hard time measuring the long, multi-hour tasks these models are becoming capable of handling. Read more.

The CRM that saves teams hours every week

HubSpot Smart CRM learns how your team works and adapts to help everyone perform better, which means you'll spot who needs support, celebrate wins instantly, and track what actually matters. The result? You get back hours every week to focus on growth instead of admin work. Start free today.

5 new AI-powered tools from around the web

arXiv is a free online library where researchers share pre-publication papers.

Thank you for reading today’s edition.

Your feedback is valuable. Respond to this email and tell us how you think we could add more value to this newsletter.

Interested in reaching smart readers like you? To become an AI Breakfast sponsor, reply to this email or DM us on 𝕏!