Good morning. It’s Monday, January 22nd.

Did you know: 40 years ago today, Apple's iconic "1984" commercial aired during Super Bowl XVIII.

In today’s email:

AI and Data Integrity

AI in Healthcare

AI Hardware and Innovation

AI Ethics and Unpredictable Behavior

5 New AI Tools

Latest AI Research Papers

You read. We listen. Let us know what you think by replying to this email.

Today’s trending AI news stories

AI and Data Integrity

> Nightshade, a groundbreaking tool by the University of Chicago's Glaze Project, has been launched for artists to combat AI model scraping. This free software, designed to 'poison' AI models, subtly alters images at the pixel level so that AI models perceive them as something different from what humans see. It’s a significant step in the ongoing battle against unauthorized data scraping, offering artists a way to protect their works from being used to train AI without consent. Nightshade, available for both Mac and Windows, represents an innovative approach to ensuring data integrity and artist rights in the age of generative AI.

> At the World Economic Forum in Davos, experts proposed blockchain as a pivotal tool to address biases in AI training data, suggesting its potential as a transformative application beyond cryptocurrency. This technology can create immutable ledgers for AI data, ensuring transparency and accountability. Casper Labs and IBM’s collaboration exemplifies this concept, offering mechanisms to trace AI training and correct errors through rollbacks. This innovative intersection of blockchain and AI aims to enhance data integrity, prevent misinformation, and foster responsible AI development, marking a significant step in ethical AI practices.
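
To make the "immutable ledger" idea concrete, here is a minimal Python sketch of a hash-chained, append-only log of training-data records. It is a hypothetical illustration, not the Casper Labs/IBM system: the `Ledger` class, the record fields, and the `rollback_to` helper are assumptions. Each entry commits to the hash of the previous one, so editing any past record breaks verification, and "rollback" here means recovering the known-good prefix of records rather than deleting history.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical append-only log: each entry stores the hash of the previous entry."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _hash(prev_hash: str, record: dict) -> str:
        # Canonical JSON (sorted keys) so identical records always hash identically.
        payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry_hash = self._hash(prev_hash, record)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every link; any edited record or broken link returns False."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._hash(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True

    def rollback_to(self, entry_hash: str) -> list:
        """Return records up to and including entry_hash (a known-good training state)."""
        for i, entry in enumerate(self.entries):
            if entry["hash"] == entry_hash:
                return [e["record"] for e in self.entries[: i + 1]]
        raise KeyError(entry_hash)


ledger = Ledger()
first = ledger.append({"batch": "images-001", "source": "licensed"})
ledger.append({"batch": "images-002", "source": "scraped"})
print(ledger.verify())            # True
print(ledger.rollback_to(first))  # [{'batch': 'images-001', 'source': 'licensed'}]
ledger.entries[1]["record"]["source"] = "licensed"  # tamper with history
print(ledger.verify())            # False
```

A real blockchain adds distributed consensus on top of this chaining; the sketch only shows why a tampered record becomes detectable.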

AI in Healthcare

> A medical study published in Alcohol: Clinical & Experimental Research used AI to scrutinize the medical records of 53,811 pre-surgery patients, revealing a pressing issue: conventional patient charts often miss signs of hazardous alcohol consumption. By employing a natural language processing model, the study not only identified diagnostic codes but also interpreted nuanced indicators in clinical notes and test results, effectively tripling the detection of at-risk patients to 14.5%.

> The World Health Organization (WHO) has introduced guidelines for the ethical application of multi-modal generative AI in healthcare. These AI models, rapidly becoming a part of healthcare, can process diverse inputs like text, images, and videos, enhancing medical research and care. Despite their potential, risks like misinformation, data bias, and cybersecurity concerns are highlighted. WHO urges a collaborative approach in AI’s development and regulation, involving various stakeholders to ensure its benefits are harnessed ethically and equitably.

AI Hardware and Innovation

> OpenAI CEO Sam Altman is strategizing a major leap in AI hardware by initiating discussions with heavyweight investors like Sheikh Tahnoon bin Zayed al-Nahyan and tech giants like Taiwan Semiconductor Manufacturing Co. (TSMC), aiming to establish an expensive global network of AI chip factories. This ambitious move, reported by the Financial Times and Bloomberg, seeks to elevate OpenAI’s AI model capabilities beyond GPT-4, ensuring a steady and independent chip supply against the backdrop of escalating global AI demand and the current market dominance of Nvidia’s GPUs. This strategic shift not only positions OpenAI at the forefront of AI innovation but also signals a transformative era in the AI hardware landscape.

> A leaked internal Google memo sets an ambitious goal for 2024: to build the "world's most advanced, safe, and responsible AI," making AI the company's primary focus. Despite trailing competitors like Microsoft and OpenAI, especially in AI deployment, Google is integrating AI into its products and exploring a new chatbot offering. However, the company faces rapidly advancing rivals and AI's impact on its cloud business, pressures that have spurred layoffs and a push for efficiency. Google's commitment to AI innovation is clear, yet the road ahead is challenging and competitive.

> AI startup ElevenLabs, specializing in Voice AI, has achieved unicorn status with a $1.1 billion valuation after raising $80 million in Series B funding. The round, led by Andreessen Horowitz with participation from Nat Friedman, Daniel Gross, and Sequoia Capital, marks a leap from its $100 million valuation in 2023. The London-based firm, which has a global remote workforce of 40, plans to grow to 100 by year-end. It focuses on AI-generated voices in various languages and emotions, serving a diverse client base that includes content creators and enterprises like Storytel and The Washington Post. Its products include an AI Speech Classifier for identifying AI-generated audio and a marketplace for monetizing AI voices.

> Grayscale Investments' latest research report spotlights the burgeoning synergy between AI and cryptocurrency, marking a transformative phase in blockchain applications. Highlighting the notable 522% surge in AI-adjacent crypto assets, the study underscores blockchain's potential in addressing key societal challenges linked to AI, such as data privacy and power centralization. The report advocates for a transparent and decentralized approach to AI development, aligning with blockchain principles, and presents innovative blockchain solutions to combat misinformation and deepfakes.

AI Ethics and Unpredictable Behavior

> Research from the Stanford Institute for Human-Centered Artificial Intelligence reveals that open foundation models (OFMs) enhance competition, spur innovation, and balance power distribution effectively. While acknowledging potential risks like disinformation and cybersecurity, the study notes a lack of substantial evidence suggesting OFMs are riskier than closed models. The researchers advise against excessive regulation of open-source models, emphasizing the need for policymakers to carefully weigh the possible unintended impacts of AI regulation on the innovation ecosystem surrounding OFMs.

> A DPD AI chatbot amusingly malfunctioned, swearing and self-criticizing, following customer Ashley Beauchamp's prompts. The vile exchange, showcasing AI’s pervasive yet flawed integration into business, underlines the debate over AI’s effectiveness and potential supremacy. DPD acknowledged the mishap, blaming a system update and temporarily disabling the AI for revisions, while affirming the presence of human customer service. The incident humorously highlights AI’s unpredictable behavior and the challenges in deploying it effectively in customer service.

> OpenAI has banned the "Dean.Bot," an AI chatbot developed for US presidential candidate Dean Phillips, for violating guidelines against political campaigning and impersonation. Meanwhile, the OpenAI GPT store continues to feature Trump-themed chatbots engaging in political discourse. Spearheaded by entrepreneurs Matt Krisiloff and Jed Somers, the Dean.Bot's creation breached OpenAI's policies prohibiting AI's use in political spheres. This ban contrasts with the presence of other politically themed chatbots in OpenAI's repertoire, highlighting potential inconsistencies in policy application.

5 new AI-powered tools from around the web

Verisoul safeguards digital platforms with AI, detecting fake users and fraud in real time via a single SDK/API, enhancing genuine user engagement and protecting businesses financially and reputationally.

AI Lawyer 2.0 transforms legal technology by offering immediate AI-guided research, document management, mobile accessibility, and customized AI solutions. Plus, a top-secret legal AI model is imminent.

BlipCut AI Video Translator offers seamless video translation in 35 languages, natural AI dubbing, automatic captioning, and hyper-realistic voice cloning in 29 languages. Upcoming lip sync ensures synchronized video output.

Winxvideo AI is a multifunctional AI tool that enhances videos and images with upscaling to 10K, stabilization, 480fps frame boosting, 4K/8K/HDR conversion, and comprehensive editing features. It is ideal for quality enhancement, footage stabilization, format conversion, file compression, and professional editing, including screen recording and audio enhancement.

Bonkers is an ultra-simple text-to-image generator, perfect for marketers, students, and creators. It effortlessly turns text into visuals, offering versatile themes and aspect ratios for various platforms.

arXiv is a free online library where researchers share pre-publication papers.

Depth Anything, powered by large-scale unlabeled data, revolutionizes monocular depth estimation. It employs data augmentation and semantic priors for robust, cross-scene performance. It excels at zero-shot estimation, sets a new standard for depth-conditioned ControlNet, and offers a solid base for metric depth estimation and semantic segmentation tasks. The model adeptly handles diverse, challenging environments such as low-light and complex scenes. Its scalability and adaptability make it a game-changer in the realm of depth estimation, pushing the boundaries of what's achievable with AI in understanding and interpreting visual data.

ActAnywhere automates video background generation, aligning with subject motion and creative intent, using large-scale video diffusion models. It takes a foreground segmentation and a condition image as input and produces videos with realistic subject-scene interactions that adhere to the condition frame. Trained on a massive human-scene interaction dataset, ActAnywhere excels at generating coherent videos, outperforming baselines and generalizing to non-human subjects, which streamlines a traditionally manual, tedious process in filmmaking and visual effects.

Inflation with Diffusion introduces an efficient, high-quality approach to text-to-video super-resolution. It builds on pixel-level image diffusion models, inflating the weights of a text-to-image model into a video framework and adding temporal adaptation so that generated videos look as true to life as possible. Tested on the Shutterstock dataset, it delivers superior visual quality and a high degree of temporal consistency while striking a good balance between computational cost and super-resolution quality. This first-of-its-kind approach is an important step toward making high-quality videos from plain language.
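
One common way to reuse an image model on video, assumed here rather than taken from the paper, is to apply its pretrained layers to every frame with shared weights and add a zero-initialized residual temporal layer. The video model then starts out reproducing the image model exactly and only learns cross-frame mixing during tuning. The pure-Python sketch below shows that pattern; `image_layer`, `inflate`, and `TemporalAdapter` are hypothetical names, and a real model would use convolution or attention layers on tensors.

```python
from typing import Callable, List

Frame = List[float]  # one frame, flattened to a list of pixel values
Video = List[Frame]  # a clip of T frames


def image_layer(frame: Frame) -> Frame:
    """Hypothetical stand-in for a pretrained per-image layer (weights frozen)."""
    return [2.0 * x + 1.0 for x in frame]


def inflate(spatial_layer: Callable[[Frame], Frame]) -> Callable[[Video], Video]:
    """Reuse an image layer on video by applying it to every frame with shared weights."""
    def video_layer(video: Video) -> Video:
        return [spatial_layer(frame) for frame in video]
    return video_layer


class TemporalAdapter:
    """Residual 1-D convolution over time (kernel size 3, zero padding at clip edges).

    The kernel starts at zero, so the adapter is an identity map at initialization
    and the inflated model initially matches the image model frame by frame.
    """

    def __init__(self) -> None:
        self.kernel = [0.0, 0.0, 0.0]  # weights for frames t-1, t, t+1

    def __call__(self, video: Video) -> Video:
        num_frames = len(video)
        out: Video = []
        for t, frame in enumerate(video):
            mixed: Frame = []
            for p, value in enumerate(frame):
                acc = 0.0
                for weight, offset in zip(self.kernel, (-1, 0, 1)):
                    s = t + offset
                    if 0 <= s < num_frames:
                        acc += weight * video[s][p]
                mixed.append(value + acc)  # residual connection
            out.append(mixed)
        return out


video = [[0.0, 1.0], [2.0, 3.0], [4.0, 5.0]]
video_layer = inflate(image_layer)
adapter = TemporalAdapter()
print(adapter(video_layer(video)))  # [[1.0, 3.0], [5.0, 7.0], [9.0, 11.0]] (per-frame result)
adapter.kernel = [0.5, 0.0, 0.5]    # pretend tuning learned to mix neighboring frames
print(adapter(video_layer(video)))  # [[3.5, 6.5], [10.0, 14.0], [11.5, 14.5]]
```

Zero initialization is the design choice that matters: tuning starts from the image model's quality instead of from a randomly perturbed one.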

MEDUSA is an innovative acceleration framework designed to speed up Large Language Model (LLM) inference by adding extra decoding heads that predict multiple tokens in parallel. It employs a tree-based attention mechanism to process multiple candidate continuations simultaneously, significantly reducing the number of decoding steps. The framework offers two fine-tuning procedures: MEDUSA-1, which fine-tunes the heads directly on top of a frozen backbone LLM, accelerating inference without quality loss, and MEDUSA-2, which fine-tunes the heads jointly with the backbone LLM for better prediction accuracy and higher speedup. Extensions include self-distillation for scenarios lacking training data and a typical acceptance scheme that improves the acceptance rate while maintaining generation quality. MEDUSA has been empirically validated to achieve substantial speedups (2.2-3.6×) across various models and training procedures without compromising generation quality, making it a practical and user-friendly solution for LLM inference acceleration.
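
MEDUSA's typical acceptance, as we understand it from the paper, accepts a candidate token when the backbone assigns it a probability above min(ε, δ·exp(-H(p))), where H(p) is the entropy of the backbone's next-token distribution; decoding then keeps the longest accepted prefix. The sketch below checks a single candidate path, whereas the real framework scores a whole tree of candidates in one forward pass using tree attention. The function names, toy distributions, and ε/δ values are hypothetical.

```python
import math
from typing import Dict, List, Sequence

# Hypothetical representation: the backbone's next-token distribution at one position.
Dist = Dict[str, float]


def entropy(dist: Dist) -> float:
    """Shannon entropy (in nats) of a next-token distribution."""
    return -sum(p * math.log(p) for p in dist.values() if p > 0)


def typical_accept(dist: Dist, token: str, epsilon: float, delta: float) -> bool:
    """Accept `token` if its backbone probability clears an entropy-adaptive threshold.

    Peaked (low-entropy) distributions hit the hard cap `epsilon`; flat
    (high-entropy) ones lower the bar to delta * exp(-H).
    """
    threshold = min(epsilon, delta * math.exp(-entropy(dist)))
    return dist.get(token, 0.0) > threshold


def accepted_prefix(candidate: Sequence[str], backbone_dists: Sequence[Dist],
                    epsilon: float, delta: float) -> List[str]:
    """Keep tokens proposed by the extra heads until the backbone rejects one.

    backbone_dists[i] is the backbone's distribution for position i, conditioned
    on the prompt plus candidate[:i]; in MEDUSA these come from one forward pass.
    """
    accepted: List[str] = []
    for token, dist in zip(candidate, backbone_dists):
        if not typical_accept(dist, token, epsilon, delta):
            break
        accepted.append(token)
    return accepted


dists = [
    {"the": 0.9, "a": 0.1},
    {"cat": 0.5, "dog": 0.5},
    {"ran": 0.95, "sat": 0.05},
]
print(accepted_prefix(["the", "cat", "sat"], dists, epsilon=0.09, delta=0.3))
# ['the', 'cat']
```

Three candidate tokens yield two accepted ones in a single verification step, which is where the speedup over one-token-per-step decoding comes from.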

Rambler is an LLM-powered interface designed to support writing through speech, transforming disfluent and wordy speech into coherent text. It offers two main functionalities: gist extraction and macro revision. Gist extraction uses LLMs to generate keywords and summaries, aiding users in reviewing and interacting with their spoken text. Macro revisions enable conceptual-level editing like respeaking, splitting, merging, and transforming text, without needing specific editing locations. This approach streamlines the process of converting spontaneous spoken words into structured writing. A comparative study with 12 participants showed Rambler's superiority over traditional speech-to-text editors, enhancing iterative revisions and providing robust user control over content.

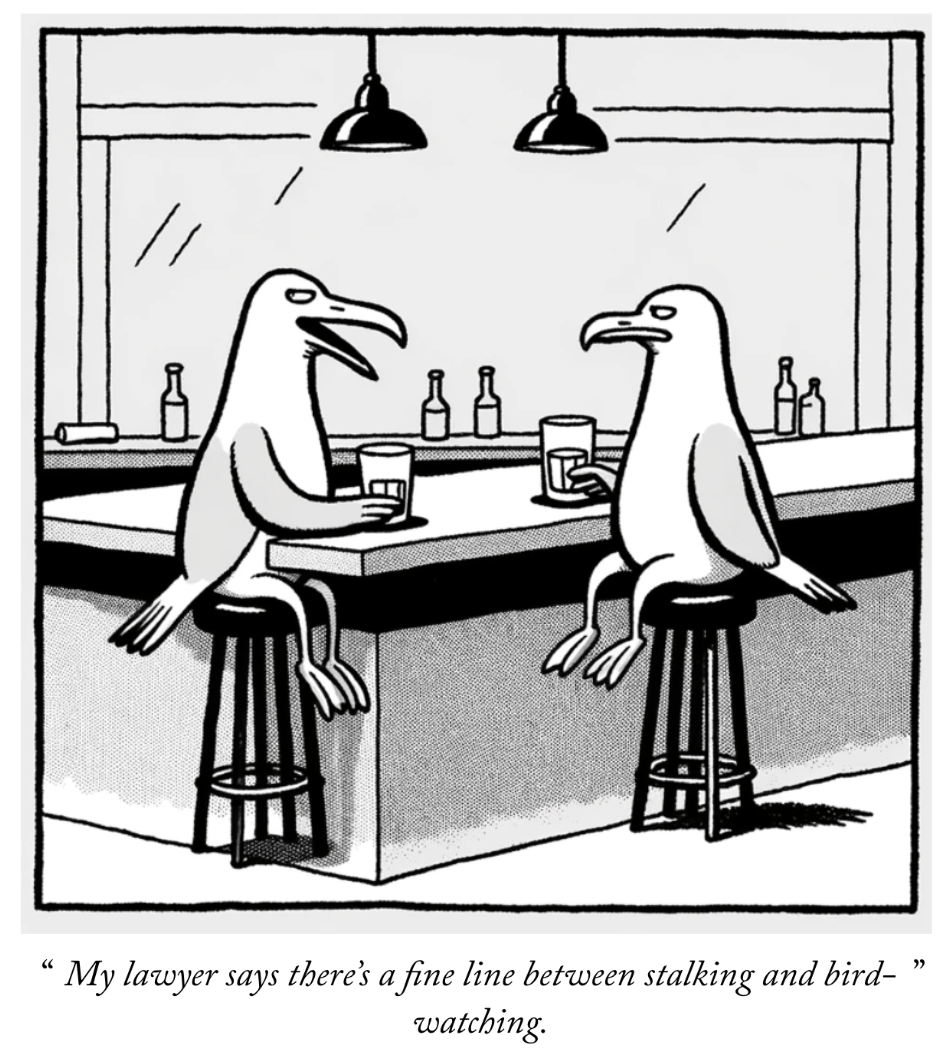

ChatGPT Creates Comics

Thank you for reading today’s edition.

Your feedback is valuable. Respond to this email and tell us how you think we could add more value to this newsletter.

Interested in reaching smart readers like you? To become an AI Breakfast sponsor, apply here.